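Training your own model

- I. Create the training and test sets
  - 1. Create the images folder
  - 2. Annotate the images with labelImg
- II. Build your own dataset
  - 1. Convert the .xml files to .csv files
  - 2. Convert the .csv files to TFRecord files
- III. Download the pretrained model and config file
  - 1. Download the pretrained model
  - 2. Config file
  - 3. Create the label file
- IV. Training
- V. Problems you may run into
  - 1. Not enough GPU memory
- Reference blogs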

I. Create the training and test sets

1. Create the images folder

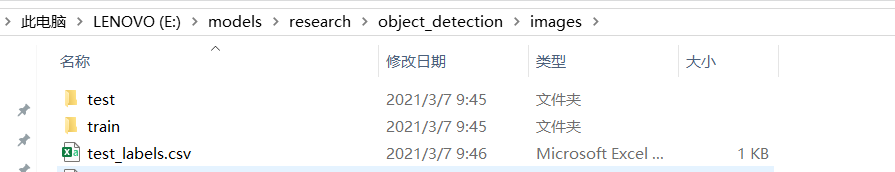

In the object_detection directory, create an images folder, and inside it create two subfolders named test and train.
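The folder layout described above can also be created from a script. This is a minimal sketch, assuming it is called with the object_detection directory as the base; the helper name make_image_dirs is illustrative and not part of the original tutorial.

```python
from pathlib import Path


def make_image_dirs(base="."):
    """Create images/train and images/test under `base` (hypothetical helper)."""
    created = []
    for split in ("train", "test"):
        folder = Path(base) / "images" / split
        # parents=True also creates images/; exist_ok=True makes reruns harmless.
        folder.mkdir(parents=True, exist_ok=True)
        created.append(folder)
    return created
```

Calling make_image_dirs() from inside object_detection leaves existing folders and their contents untouched, so it is safe to run more than once.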

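2. Annotate the images with labelImg

Download labelImg and extract the archive. Open Anaconda Prompt and cd into the extracted directory, here E:\labelImg\labelImg-master. Then install PyQt.

Once PyQt is installed, run:

pyrcc5 -o resources.py resources.qrc

If the command prints nothing, it finished without errors.

Finally, run python labelImg.py to open the labelImg annotation tool.

The data folder contains predefined_classes.txt, which lists the class names offered in the tool. Edit it to match your own classes.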

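Keyboard shortcuts:

- Ctrl + S: save
- Ctrl + D: copy the current label and bounding box
- Space: mark the current image as verified
- W: create a bounding box
- D: next image
- A: previous image
- Del: delete the selected bounding box
- Ctrl + +: zoom in
- Ctrl + -: zoom out
- ↑ → ↓ ←: move the selected bounding box with the arrow keys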

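II. Build your own dataset

1. Convert the .xml files to .csv files

When you save an annotation, labelImg writes an .xml file with the same name as the image. These .xml files need to be converted to .csv with the following script: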

import os
import glob
import pandas as pd
import xml.etree.ElementTree as ET


def xml_to_csv(path):
    # Collect one row per annotated object from every .xml file in `path`.
    xml_list = []
    for xml_file in glob.glob(path + '/*.xml'):
        tree = ET.parse(xml_file)
        root = tree.getroot()
        for member in root.findall('object'):
            # Positional lookups rely on the element order labelImg writes:
            # size[0]=width, size[1]=height, member[0]=name, member[4]=bndbox.
            value = (root.find('filename').text,
                     int(root.find('size')[0].text),
                     int(root.find('size')[1].text),
                     member[0].text,
                     int(member[4][0].text),
                     int(member[4][1].text),
                     int(member[4][2].text),
                     int(member[4][3].text)
                     )
            xml_list.append(value)
    column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']
    xml_df = pd.DataFrame(xml_list, columns=column_name)
    return xml_df


def main():
    for folder in ['train', 'test']:
        # Folder that holds the .xml files: object_detection/images/train or test
        image_path = os.path.join(os.getcwd(), ('images/' + folder))
        xml_df = xml_to_csv(image_path)
        xml_df.to_csv(('images/' + folder + '_labels.csv'), index=None)
        print('Successfully converted xml to csv.')


main()

Save the code above as xml_to_csv.py in the object_detection folder, cd into object_detection, and run python xml_to_csv.py. This produces two .csv files in the images folder: train_labels.csv and test_labels.csv.
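The conversion script reads each field by position (for example member[4][0] for xmin), which depends on the element order in the file. As an illustration of what those positions refer to, here is a sketch that reads the same fields by tag name. The sample annotation is made up, and the function name parse_voc_xml is hypothetical rather than part of the tutorial's script.

```python
import xml.etree.ElementTree as ET

# Made-up annotation in the Pascal VOC layout that labelImg writes.
SAMPLE_XML = """
<annotation>
  <filename>cup_001.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>cup</name>
    <pose>Unspecified</pose>
    <truncated>0</truncated>
    <difficult>0</difficult>
    <bndbox><xmin>100</xmin><ymin>120</ymin><xmax>300</xmax><ymax>360</ymax></bndbox>
  </object>
</annotation>
"""


def parse_voc_xml(xml_text):
    """Return one tuple per <object>, in the same column order as the CSV."""
    root = ET.fromstring(xml_text)
    size = root.find("size")
    rows = []
    for obj in root.findall("object"):
        box = obj.find("bndbox")
        rows.append((
            root.findtext("filename"),
            int(size.findtext("width")),
            int(size.findtext("height")),
            obj.findtext("name"),
            int(box.findtext("xmin")),
            int(box.findtext("ymin")),
            int(box.findtext("xmax")),
            int(box.findtext("ymax")),
        ))
    return rows


if __name__ == "__main__":
    for row in parse_voc_xml(SAMPLE_XML):
        print(row)
```

Looking fields up by name keeps working if an annotation tool writes the child elements in a different order, at the cost of a slightly longer script.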

2. Convert the .csv files to TFRecord files

"""

Usage:
  # From the object_detection folder
  # Create train data:
  python generate_tfrecord.py --csv_input=images/train_labels.csv --image_dir=images/train --output_path=train.record
  # Create test data:
  python generate_tfrecord.py --csv_input=images/test_labels.csv --image_dir=images/test --output_path=test.record
"""
from __future__ import division
from __future__ import print_function
from __future__ import absolute_import

import os
import io
import pandas as pd
import tensorflow as tf  # TensorFlow 1.x API (tf.app.flags, tf.gfile, tf.python_io)

from PIL import Image
from object_detection.utils import dataset_util
from collections import namedtuple, OrderedDict

flags = tf.app.flags
flags.DEFINE_string('csv_input', '', 'Path to the CSV input')
flags.DEFINE_string('image_dir', '', 'Path to the image directory')
flags.DEFINE_string('output_path', '', 'Path to output TFRecord')
FLAGS = flags.FLAGS


# Modify this function to list your own classes; the ids must match the label map.
def class_text_to_int(row_label):
    if row_label == 'cup':
        return 1
    elif row_label == 'book':
        return 2
    else:
        return None


def split(df, group):
    # Group the CSV rows by image so that each image becomes one example.
    data = namedtuple('data', ['filename', 'object'])
    gb = df.groupby(group)
    return [data(filename, gb.get_group(x)) for filename, x in zip(gb.groups.keys(), gb.groups)]


def create_tf_example(group, path):
    with tf.gfile.GFile(os.path.join(path, '{}'.format(group.filename)), 'rb') as fid:
        encoded_jpg = fid.read()
    encoded_jpg_io = io.BytesIO(encoded_jpg)
    image = Image.open(encoded_jpg_io)
    width, height = image.size

    filename = group.filename.encode('utf8')
    image_format = b'jpg'
    xmins = []
    xmaxs = []
    ymins = []
    ymaxs = []
    classes_text = []
    classes = []

    for index, row in group.object.iterrows():
        # Box coordinates are stored normalized to the range [0, 1].
        xmins.append(row['xmin'] / width)
        xmaxs.append(row['xmax'] / width)
        ymins.append(row['ymin'] / height)
        ymaxs.append(row['ymax'] / height)
        classes_text.append(row['class'].encode('utf8'))
        classes.append(class_text_to_int(row['class']))

    tf_example = tf.train.Example(features=tf.train.Features(feature={
        'image/height': dataset_util.int64_feature(height),
        'image/width': dataset_util.int64_feature(width),
        'image/filename': dataset_util.bytes_feature(filename),
        'image/source_id': dataset_util.bytes_feature(filename),
        'image/encoded': dataset_util.bytes_feature(encoded_jpg),
        'image/format': dataset_util.bytes_feature(image_format),
        'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),
        'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),
        'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),
        'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),
        'image/object/class/text': dataset_util.bytes_list_feature(classes_text),
        'image/object/class/label': dataset_util.int64_list_feature(classes),
    }))
    return tf_example


def main(_):
    writer = tf.python_io.TFRecordWriter(FLAGS.output_path)
    path = os.path.join(os.getcwd(), FLAGS.image_dir)
    examples = pd.read_csv(FLAGS.csv_input)
    grouped = split(examples, 'filename')
    for group in grouped:
        tf_example = create_tf_example(group, path)
        writer.write(tf_example.SerializeToString())

    writer.close()
    output_path = os.path.join(os.getcwd(), FLAGS.output_path)
    print('Successfully created the TFRecords: {}'.format(output_path))


if __name__ == '__main__':
    tf.app.run()

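Save the code above as generate_tfrecord.py in the object_detection folder, then run the following two commands from that folder:

python generate_tfrecord.py --csv_input=images/train_labels.csv --image_dir=images/train --output_path=train.record

python generate_tfrecord.py --csv_input=images/test_labels.csv --image_dir=images/test --output_path=test.record

These commands write train.record and test.record into object_detection itself, while the config file in the next section reads them from data/. Either move the two files into the data folder or change the input_path values to match.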
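Because generate_tfrecord.py writes one example per image, the number of distinct filenames in test_labels.csv is the value that num_examples in eval_config should carry. The sketch below counts images and boxes per class using only the standard library. It assumes the column names written by xml_to_csv.py; the function name summarize_labels and the sample rows are made up for illustration.

```python
import csv
import io
from collections import Counter


def summarize_labels(csv_file):
    """Count distinct images and boxes per class in a labels CSV.

    `csv_file` is any open text file with the columns written by
    xml_to_csv.py (filename, width, height, class, xmin, ymin, xmax, ymax).
    """
    filenames = set()
    boxes_per_class = Counter()
    for row in csv.DictReader(csv_file):
        filenames.add(row["filename"])
        boxes_per_class[row["class"]] += 1
    return len(filenames), dict(boxes_per_class)


if __name__ == "__main__":
    # Made-up rows standing in for images/test_labels.csv.
    sample = io.StringIO(
        "filename,width,height,class,xmin,ymin,xmax,ymax\n"
        "a.jpg,640,480,cup,10,20,110,220\n"
        "a.jpg,640,480,cup,200,50,320,260\n"
        "b.jpg,640,480,cup,30,40,130,240\n"
    )
    num_images, per_class = summarize_labels(sample)
    print("images:", num_images, "boxes per class:", per_class)
```

For a real file, pass open("images/test_labels.csv", newline="") instead of the in-memory sample. The per-class counts also make it easy to spot a class name that class_text_to_int does not handle.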

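III. Download the pretrained model and config file

1. Download the pretrained model

Reference blog: https://blog.csdn.net/KyrieHe/article/details/79814790

Download the model into the object_detection folder and extract it. ssd_mobilenet_v1_coco is used here, chosen for its speed.

2. Config file

Create a training folder under object_detection and put the config file in it. The training command in section IV expects it at training/ssd_mobilenet_v1_coco.config.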

# SSD with Mobilenet v1 configuration for MSCOCO Dataset.
# Users should configure the fine_tune_checkpoint field in the train config as
# well as the label_map_path and input_path fields in the train_input_reader and
# eval_input_reader. Search for "PATH_TO_BE_CONFIGURED" to find the fields that
# should be configured.

model {
  ssd {
    num_classes: 1  # number of classes; change to match your own data
    box_coder {
      faster_rcnn_box_coder {
        y_scale: 10.0
        x_scale: 10.0
        height_scale: 5.0
        width_scale: 5.0
      }
    }
    matcher {
      argmax_matcher {
        matched_threshold: 0.5
        unmatched_threshold: 0.5
        ignore_thresholds: false
        negatives_lower_than_unmatched: true
        force_match_for_each_row: true
      }
    }
    similarity_calculator {
      iou_similarity {
      }
    }
    anchor_generator {
      ssd_anchor_generator {
        num_layers: 6
        min_scale: 0.2
        max_scale: 0.95
        aspect_ratios: 1.0
        aspect_ratios: 2.0
        aspect_ratios: 0.5
        aspect_ratios: 3.0
        aspect_ratios: 0.3333
      }
    }
    image_resizer {
      fixed_shape_resizer {
        height: 300
        width: 300
      }
    }
    box_predictor {
      convolutional_box_predictor {
        min_depth: 0
        max_depth: 0
        num_layers_before_predictor: 0
        use_dropout: false
        dropout_keep_probability: 0.8
        kernel_size: 1
        box_code_size: 4
        apply_sigmoid_to_scores: false
        conv_hyperparams {
          activation: RELU_6,
          regularizer {
            l2_regularizer {
              weight: 0.00004
            }
          }
          initializer {
            truncated_normal_initializer {
              stddev: 0.03
              mean: 0.0
            }
          }
          batch_norm {
            train: true,
            scale: true,
            center: true,
            decay: 0.9997,
            epsilon: 0.001,
          }
        }
      }
    }
    feature_extractor {
      type: 'ssd_mobilenet_v1'
      min_depth: 16
      depth_multiplier: 1.0
      conv_hyperparams {
        activation: RELU_6,
        regularizer {
          l2_regularizer {
            weight: 0.00004
          }
        }
        initializer {
          truncated_normal_initializer {
            stddev: 0.03
            mean: 0.0
          }
        }
        batch_norm {
          train: true,
          scale: true,
          center: true,
          decay: 0.9997,
          epsilon: 0.001,
        }
      }
    }
    loss {
      classification_loss {
        weighted_sigmoid {
        }
      }
      localization_loss {
        weighted_smooth_l1 {
        }
      }
      hard_example_miner {
        num_hard_examples: 3000
        iou_threshold: 0.99
        loss_type: CLASSIFICATION
        max_negatives_per_positive: 3
        min_negatives_per_image: 0
      }
      classification_weight: 1.0
      localization_weight: 1.0
    }
    normalize_loss_by_num_matches: true
    post_processing {
      batch_non_max_suppression {
        score_threshold: 1e-8
        iou_threshold: 0.6
        max_detections_per_class: 100
        max_total_detections: 100
      }
      score_converter: SIGMOID
    }
  }
}

############################################################

train_config: {
  batch_size: 2  # keep this small
  optimizer {
    rms_prop_optimizer: {
      learning_rate: {
        exponential_decay_learning_rate {
          initial_learning_rate: 0.004
          decay_steps: 800720
          decay_factor: 0.95
        }
      }
      momentum_optimizer_value: 0.9
      decay: 0.9
      epsilon: 1.0
    }
  }
  #fine_tune_checkpoint: "PATH_TO_BE_CONFIGURED/model.ckpt"  # may be commented out; the pretrained checkpoint is then not used
  #from_detection_checkpoint: true  # commented out
  # Note: The below line limits the training process to 200K steps, which we
  # empirically found to be sufficient enough to train the pets dataset. This
  # effectively bypasses the learning rate schedule (the learning rate will
  # never decay). Remove the below line to train indefinitely.
  num_steps: 200000
  data_augmentation_options {
    random_horizontal_flip {
    }
  }
  data_augmentation_options {
    ssd_random_crop {
    }
  }
}

train_input_reader: {
  tf_record_input_reader {
    input_path: "data/train.record"  # location of the training set
  }
  label_map_path: "data/cup.pbtxt"  # location of the label file
}

eval_config: {
  num_examples: 9  # number of images in the test set
  # Note: The below line limits the evaluation process to 10 evaluations.
  # Remove the below line to evaluate indefinitely.
  max_evals: 10
}

eval_input_reader: {
  tf_record_input_reader {
    input_path: "data/test.record"  # location of the test set
  }
  label_map_path: "data/cup.pbtxt"  # label file
  shuffle: false
  num_readers: 1
}
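A wrong input_path or label_map_path in the config usually only surfaces once training is launched, so it can help to check the paths first. The following is a rough sketch that pulls those two fields out of the config text with a regular expression and reports the ones that do not exist. It is not a protobuf parser, it assumes no '#' character appears inside a quoted path, and the function names are hypothetical.

```python
import os
import re

# Config fields whose values are expected to be existing files.
PATH_FIELDS = ("input_path", "label_map_path")


def config_paths(config_text):
    """Return (field, path) pairs found in the config, ignoring comments."""
    pairs = []
    for line in config_text.splitlines():
        line = line.split("#", 1)[0]  # drop everything after a '#'
        match = re.search(r'(\w+)\s*:\s*"([^"]*)"', line)
        if match and match.group(1) in PATH_FIELDS:
            pairs.append((match.group(1), match.group(2)))
    return pairs


def missing_paths(config_text, base_dir="."):
    """List configured paths that do not exist relative to `base_dir`."""
    return [
        (field, path)
        for field, path in config_paths(config_text)
        if not os.path.exists(os.path.join(base_dir, path))
    ]


if __name__ == "__main__":
    # Small excerpt standing in for training/ssd_mobilenet_v1_coco.config.
    sample = '''
    train_input_reader: {
      tf_record_input_reader {
        input_path: "data/train.record"  # location of the training set
      }
      label_map_path: "data/cup.pbtxt"
    }
    '''
    for field, path in missing_paths(sample):
        print("missing %s: %s" % (field, path))
```

To check the real file, read it with open(...).read() and pass the object_detection directory as base_dir, since the paths in the config are relative to the folder the training command is run from.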

3. Create the label file

In the data folder, create cup.pbtxt with the following content:

item {
  id: 1
  name: 'cup'
}
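The ids in the label file have to agree with the values returned by class_text_to_int in generate_tfrecord.py, and num_classes in the config has to equal the number of items. One way to keep the three in step is to derive them from a single class list. This is a sketch under that assumption; CLASSES, class_to_id and label_map_text are illustrative names, not part of the Object Detection API.

```python
# Single source of truth for the class names; ids are assigned from 1 upward
# because label map ids start at 1.
CLASSES = ["cup"]


def class_to_id(classes):
    """Map each class name to its integer id (1-based)."""
    return {name: index for index, name in enumerate(classes, start=1)}


def label_map_text(classes):
    """Render the classes in the item { id, name } layout used by .pbtxt label maps."""
    items = []
    for name, class_id in class_to_id(classes).items():
        items.append("item {\n  id: %d\n  name: '%s'\n}\n" % (class_id, name))
    return "\n".join(items)


if __name__ == "__main__":
    print(label_map_text(CLASSES))
    print("num_classes:", len(CLASSES))
```

Writing the returned text to data/cup.pbtxt and using class_to_id(CLASSES).get(row_label) inside class_text_to_int would keep the label file and the TFRecord ids consistent when a class is added.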

IV. Training

From the object_detection folder, run:

python train.py --logtostderr --train_dir=training/ --pipeline_config_path=training/ssd_mobilenet_v1_coco.config

Once the training log starts appearing in the console, training has begun.

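V. Problems you may run into

1. Not enough GPU memory

This can happen when your own photos are too large. My first photos were about 2 MB each, and training reported that there was not enough GPU memory. The images can be shrunk as follows:

Right-click the image, choose Edit, then choose Resize, and change the values to reduce the image size.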
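Resizing by hand gets tedious with more than a few photos, so the same step can be scripted. The sketch below assumes Pillow is installed and shrinks every JPEG in a folder so that its longer side is at most max_side pixels. The default of 800 is an arbitrary illustration, not a value from the original post, and both function names are hypothetical.

```python
import os


def fit_within(width, height, max_side=800):
    """Return (width, height) scaled so the longer side is at most max_side.

    The aspect ratio is kept, and images that are already small enough
    are returned unchanged.
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))


def resize_folder(folder, max_side=800):
    """Shrink every .jpg/.jpeg file in `folder` in place (overwrites the files)."""
    # Pillow is imported here so that fit_within can be used without it.
    from PIL import Image

    for name in sorted(os.listdir(folder)):
        if not name.lower().endswith((".jpg", ".jpeg")):
            continue
        path = os.path.join(folder, name)
        with Image.open(path) as image:
            new_size = fit_within(*image.size, max_side=max_side)
            if new_size == image.size:
                continue
            resized = image.resize(new_size)
        resized.save(path)
```

Run something like resize_folder("images/train") before annotating. The .xml files store box coordinates in pixels, so shrinking an image after it has been labeled leaves its boxes pointing at the wrong positions.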

Reference blogs:

https://blog.csdn.net/dy_guox/article/details/79111949

https://blog.csdn.net/csdn_6105/article/details/82933628