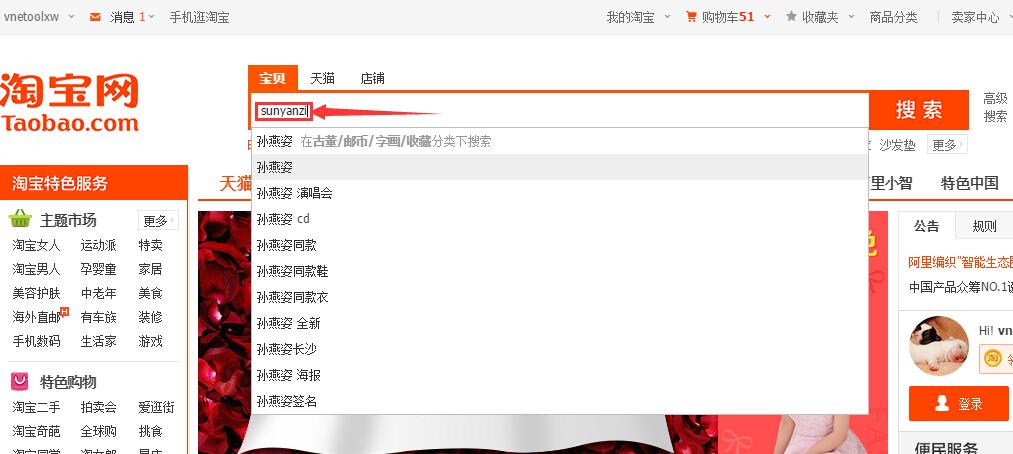

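Today's topic is pinyin search. The feature exists to improve the user experience: at the very least it spares users from switching input methods, and if abbreviated pinyin (initials only) is supported as well, users can type even fewer characters. Fewer keystrokes means more convenience. Here are some classic examples of pinyin search: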

After the demos in the screenshots above, the purpose of pinyin search and the reasons for using it should be clear. So let's look at how to implement it:

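Once the terms have been converted to pinyin (the sample code below uses pinyin4j for the conversion), the next step is to run the pinyin through n-gram processing. For example, the pinyin for "王杰" is wangjie. If users had to type wangjie completely and correctly before getting any results for "王杰", we would be testing their pinyin skills, and what about users who confuse front and back nasal finals (-n vs. -ng)? So we need prefix or fuzzy matching: typing just wan should be enough to match "王". The goal, again, is to cut down the steps the user has to take; reaching the same result in fewer steps is always what users like best.

Take another example: "孙燕姿" is "sunyanzi" in pinyin. What if I also expect "yanz" to find it? This is where n-grams come in. If we split "sunyanzi" into n-grams of length 2 to 4, we get: su un ny ya an nz zi sun uny nya yan anz nzi suny unya nyan yanz anzi. Now a user who types yanz gets a hit.

N-grams only suit short search keywords, though. If the user enters 20-30 Chinese characters, converting them to pinyin multiplies the length by roughly 5-6, and n-gramming the result multiplies the term count by about another 10 or more. Since BooleanQuery accepts at most 1024 clauses by default, you can see the problem.

The resulting grams are recorded through CharTermAttribute at the same position as the original term, much like the way synonyms are implemented. So the key to pinyin search is the analysis stage: during tokenization the pinyin is n-grammed and stored in CharTermAttribute as if it were a synonym. This inevitably increases the index size, which costs extra disk space and also affects index rebuild and search performance. The search stage is no different from an ordinary query.

If you don't want the large number of terms produced by n-grams to hurt search performance, you can try EdgeNGramTokenFilter, which produces prefix n-grams: every gram starts at the first character. For example, processing sunyanzi with EdgeNGramTokenFilter at lengths 2-8 yields: su sun suny sunya sunyan sunyanz sunyanzi. This reduces the number of terms, but the drawback is that typing yanzi no longer finds anything: the matching granularity is coarser than with full n-grams.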
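To make the difference between the two splitting strategies concrete, here is a minimal plain-Java sketch that does not depend on Lucene. The class name `NGramDemo` and its two helper methods are illustrative assumptions, not part of the original sample; they only mimic what the splitting produces for a single term.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative helper (hypothetical, not part of the original sample code). */
public class NGramDemo {

    /** All substrings of s with a length between minGram and maxGram, shortest first. */
    public static List<String> ngrams(String s, int minGram, int maxGram) {
        List<String> grams = new ArrayList<>();
        for (int n = minGram; n <= maxGram; n++) {
            for (int start = 0; start + n <= s.length(); start++) {
                grams.add(s.substring(start, start + n));
            }
        }
        return grams;
    }

    /** Prefixes of s only: every gram starts at the first character. */
    public static List<String> edgeNgrams(String s, int minGram, int maxGram) {
        List<String> grams = new ArrayList<>();
        for (int n = minGram; n <= maxGram && n <= s.length(); n++) {
            grams.add(s.substring(0, n));
        }
        return grams;
    }

    public static void main(String[] args) {
        List<String> full = ngrams("sunyanzi", 2, 4);
        List<String> edge = edgeNgrams("sunyanzi", 2, 8);
        System.out.println(full.size() + " n-grams: " + full);
        System.out.println(edge.size() + " edge n-grams: " + edge);
        System.out.println("n-grams contain yanz: " + full.contains("yanz"));
        System.out.println("edge n-grams contain yanz: " + edge.contains("yanz"));
    }
}
```

For an 8-letter term, lengths 2-4 already give 18 grams, while the prefix variant gives 7 for lengths 2-8. That is the trade-off described above: fewer terms, but "yanz" is only reachable with the full n-gram split.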

Below is a sample program for pinyin search. The code is as follows:

package com.yida.framework.lucene5.pinyin;

import java.io.IOException;

import net.sourceforge.pinyin4j.PinyinHelper;
import net.sourceforge.pinyin4j.format.HanyuPinyinCaseType;
import net.sourceforge.pinyin4j.format.HanyuPinyinOutputFormat;
import net.sourceforge.pinyin4j.format.HanyuPinyinToneType;
import net.sourceforge.pinyin4j.format.exception.BadHanyuPinyinOutputFormatCombination;

import org.apache.lucene.analysis.TokenFilter;
import org.apache.lucene.analysis.TokenStream;
import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;

/**
 * Pinyin filter [converts Chinese characters to pinyin]
 * @author Lanxiaowei
 */
public class PinyinTokenFilter extends TokenFilter {
    private final CharTermAttribute termAtt;
    /** Pinyin output format [based on pinyin4j] */
    private final HanyuPinyinOutputFormat outputFormat;
    /** If true, emit abbreviated pinyin (the first letter of the first reading of each character); otherwise emit the full pinyin */
    private boolean firstChar;
    /** Minimum term length [shorter terms are not converted to pinyin] */
    private int minTermLength;
    private char[] curTermBuffer;
    private int curTermLength;
    /** State flag: whether the original (Chinese) term still has to be emitted */
    private boolean outChinese;

    public PinyinTokenFilter(TokenStream input) {
        this(input, Constant.DEFAULT_FIRST_CHAR, Constant.DEFAULT_MIN_TERM_LRNGTH);
    }

    public PinyinTokenFilter(TokenStream input, boolean firstChar) {
        this(input, firstChar, Constant.DEFAULT_MIN_TERM_LRNGTH);
    }

    public PinyinTokenFilter(TokenStream input, boolean firstChar,
            int minTermLength) {
        this(input, firstChar, minTermLength, Constant.DEFAULT_NGRAM_CHINESE);
    }

    public PinyinTokenFilter(TokenStream input, boolean firstChar,
            int minTermLength, boolean outChinese) {
        super(input);
        this.termAtt = addAttribute(CharTermAttribute.class);
        this.outputFormat = new HanyuPinyinOutputFormat();
        // NOTE: as in the original code, the outChinese argument is not applied;
        // the field always starts from the default and then serves as a state flag
        // inside incrementToken().
        this.outChinese = Constant.DEFAULT_OUT_CHINESE;
        this.firstChar = firstChar;
        this.minTermLength = minTermLength;
        if (this.minTermLength < 1) {
            this.minTermLength = 1;
        }
        this.outputFormat.setCaseType(HanyuPinyinCaseType.LOWERCASE);
        this.outputFormat.setToneType(HanyuPinyinToneType.WITHOUT_TONE);
    }

    public static boolean containsChinese(String s) {
        if ((s == null) || ("".equals(s.trim()))) {
            return false;
        }
        for (int i = 0; i < s.length(); i++) {
            if (isChinese(s.charAt(i))) {
                return true;
            }
        }
        return false;
    }

    public static boolean isChinese(char a) {
        int v = a;
        // 19968 is U+4E00. A char never exceeds 65535, so the upper bound
        // below is always satisfied and only the lower bound takes effect.
        return (v >= 19968) && (v <= 171941);
    }

    @Override
    public final boolean incrementToken() throws IOException {
        while (true) {
            if (this.curTermBuffer == null) {
                if (!this.input.incrementToken()) {
                    return false;
                }
                this.curTermBuffer = this.termAtt.buffer().clone();
                this.curTermLength = this.termAtt.length();
            }
            // First emit the original term unchanged
            if (this.outChinese) {
                this.outChinese = false;
                this.termAtt.copyBuffer(this.curTermBuffer, 0,
                        this.curTermLength);
                return true;
            }
            this.outChinese = true;
            String chinese = this.termAtt.toString();
            // Then emit its pinyin as an additional term
            if (containsChinese(chinese)) {
                this.outChinese = true;
                if (chinese.length() >= this.minTermLength) {
                    try {
                        String chineseTerm = getPinyinString(chinese);
                        this.termAtt.copyBuffer(chineseTerm.toCharArray(), 0,
                                chineseTerm.length());
                    } catch (BadHanyuPinyinOutputFormatCombination e) {
                        e.printStackTrace();
                    }
                    this.curTermBuffer = null;
                    return true;
                }
            }
            this.curTermBuffer = null;
        }
    }

    @Override
    public void reset() throws IOException {
        super.reset();
    }

    private String getPinyinString(String chinese)
            throws BadHanyuPinyinOutputFormatCombination {
        String chineseTerm = null;
        if (this.firstChar) {
            // Abbreviated pinyin: first letter of the first reading of each character
            StringBuilder sb = new StringBuilder();
            for (int i = 0; i < chinese.length(); i++) {
                String[] array = PinyinHelper.toHanyuPinyinStringArray(
                        chinese.charAt(i), this.outputFormat);
                if ((array != null) && (array.length != 0)) {
                    String s = array[0];
                    char c = s.charAt(0);
                    sb.append(c);
                }
            }
            chineseTerm = sb.toString();
        } else {
            // Full pinyin, syllables joined without a separator
            chineseTerm = PinyinHelper.toHanyuPinyinString(chinese,
                    this.outputFormat, "");
        }
        return chineseTerm;
    }
}
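One detail of the filter above is worth a closer look: isChinese compares a char against 171941, but a Java char is 16 bits and never exceeds 65535, so in practice only the lower bound (19968, i.e. U+4E00) applies. The sketch below contrasts that range check with a code-point-based check built on the JDK's `Character.UnicodeScript`. The class `HanCheckDemo` and its method names are assumptions made for illustration, not something the original code contains.

```java
/** Illustrative comparison (hypothetical class, not part of the original sample code). */
public class HanCheckDemo {

    /** Range check in the style of the filter above. */
    public static boolean isChineseByRange(char c) {
        int v = c;
        return (v >= 19968) && (v <= 171941);
    }

    /** Code-point-based check using the Unicode script property. */
    public static boolean isHan(int codePoint) {
        return Character.UnicodeScript.of(codePoint) == Character.UnicodeScript.HAN;
    }

    /** True if any code point of s belongs to the Han script. */
    public static boolean containsHan(String s) {
        if (s == null) {
            return false;
        }
        return s.codePoints().anyMatch(HanCheckDemo::isHan);
    }

    public static void main(String[] args) {
        char han = '\u5B59';    // a Han character
        char hangul = '\uD55C'; // a Hangul syllable, not Chinese
        System.out.println("range(han)    = " + isChineseByRange(han));
        System.out.println("range(hangul) = " + isChineseByRange(hangul));
        System.out.println("isHan(han)    = " + isHan(han));
        System.out.println("isHan(hangul) = " + isHan(hangul));
    }
}
```

Whether the stricter check is worth it depends on the data: if the tokenizer only ever hands over Chinese or ASCII tokens, the simple range check may be good enough.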

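package com.yida.framework.lucene5.pinyin;

import java.io.IOException;

import org.apache.lucene.analysis.TokenFilter;
import org.apache.lucene.analysis.TokenStream;
import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;
import org.apache.lucene.analysis.tokenattributes.OffsetAttribute;

/**
 * TokenFilter that applies n-gram processing to the converted pinyin
 * @author Lanxiaowei
 */
public class PinyinNGramTokenFilter extends TokenFilter {
    public static final boolean DEFAULT_NGRAM_CHINESE = false;
    private final int minGram;
    private final int maxGram;
    /** Whether Chinese terms should be n-grammed as well [default: false] */
    private final boolean nGramChinese;
    private final CharTermAttribute termAtt;
    private final OffsetAttribute offsetAtt;
    private char[] curTermBuffer;
    private int curTermLength;
    private int curGramSize;
    private int tokStart;

    public PinyinNGramTokenFilter(TokenStream input) {
        this(input, Constant.DEFAULT_MIN_GRAM, Constant.DEFAULT_MAX_GRAM, DEFAULT_NGRAM_CHINESE);
    }

    public PinyinNGramTokenFilter(TokenStream input, int maxGram) {
        this(input, Constant.DEFAULT_MIN_GRAM, maxGram, DEFAULT_NGRAM_CHINESE);
    }

    public PinyinNGramTokenFilter(TokenStream input, int minGram, int maxGram) {
        this(input, minGram, maxGram, DEFAULT_NGRAM_CHINESE);
    }

    public PinyinNGramTokenFilter(TokenStream input, int minGram, int maxGram,
            boolean nGramChinese) {
        super(input);
        this.termAtt = addAttribute(CharTermAttribute.class);
        this.offsetAtt = addAttribute(OffsetAttribute.class);
        if (minGram < 1) {
            throw new IllegalArgumentException(
                    "minGram must be greater than zero");
        }
        if (minGram > maxGram) {
            throw new IllegalArgumentException(
                    "minGram must not be greater than maxGram");
        }
        this.minGram = minGram;
        this.maxGram = maxGram;
        this.nGramChinese = nGramChinese;
    }

    @Override
    public final boolean incrementToken() throws IOException {
        while (true) {
            if (this.curTermBuffer == null) {
                if (!this.input.incrementToken()) {
                    return false;
                }
                // Chinese terms pass through unchanged unless nGramChinese is set
                // (reuses the static helper from PinyinTokenFilter)
                if ((!this.nGramChinese)
                        && (PinyinTokenFilter.containsChinese(this.termAtt.toString()))) {
                    return true;
                }
                this.curTermBuffer = this.termAtt.buffer().clone();
                this.curTermLength = this.termAtt.length();
                this.curGramSize = this.minGram;
                this.tokStart = this.offsetAtt.startOffset();
            }
            if (this.curGramSize <= this.maxGram) {
                // Gram size has reached the term length: emit the whole term and move on
                if (this.curGramSize >= this.curTermLength) {
                    clearAttributes();
                    this.offsetAtt.setOffset(this.tokStart + 0, this.tokStart
                            + this.curTermLength);
                    this.termAtt.copyBuffer(this.curTermBuffer, 0,
                            this.curTermLength);
                    this.curTermBuffer = null;
                    return true;
                }
                // Grams always start at offset 0, i.e. they are prefixes of the term
                int start = 0;
                int end = start + this.curGramSize;
                clearAttributes();
                this.offsetAtt.setOffset(this.tokStart + start, this.tokStart
                        + end);
                this.termAtt.copyBuffer(this.curTermBuffer, start,
                        this.curGramSize);
                this.curGramSize += 1;
                return true;
            }
            this.curTermBuffer = null;
        }
    }

    @Override
    public void reset() throws IOException {
        super.reset();
        this.curTermBuffer = null;
    }
}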
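Because the filter above always sets start to 0, the grams it emits for a term are prefixes of that term, and once the gram size reaches the term length it emits the whole term and moves on to the next one. The following plain-Java sketch simulates that loop for a single term, without Lucene. The class `PrefixGramSimulation` and its `emit` method are assumptions made for illustration only.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative simulation of the gram loop (hypothetical, not part of the original sample code). */
public class PrefixGramSimulation {

    /** Returns the grams the loop would produce for one term. */
    public static List<String> emit(String term, int minGram, int maxGram) {
        List<String> out = new ArrayList<>();
        int gramSize = minGram;
        while (gramSize <= maxGram) {
            if (gramSize >= term.length()) {
                // Gram size reached the term length: emit the whole term and stop
                out.add(term);
                break;
            }
            // Otherwise emit the prefix of the current gram size
            out.add(term.substring(0, gramSize));
            gramSize++;
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(emit("sunyanzi", 2, 20));
        System.out.println(emit("sunyanzi", 2, 4));
        System.out.println(emit("a", 2, 4));
    }
}
```

With a maxGram of 20 the 8-letter term ends with the full "sunyanzi"; with a maxGram of 4 the output stops at "suny", so a search for the full pinyin would have to be served by the term emitted upstream rather than by a gram.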

package com.yida.framework.lucene5.pinyin;

import java.io.BufferedReader;
import java.io.Reader;
import java.io.StringReader;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.TokenStream;
import org.apache.lucene.analysis.Tokenizer;
import org.apache.lucene.analysis.core.LowerCaseFilter;
import org.apache.lucene.analysis.core.StopAnalyzer;
import org.apache.lucene.analysis.core.StopFilter;
import org.wltea.analyzer.lucene.IKTokenizer;

/**
 * Custom pinyin analyzer
 * @author Lanxiaowei
 */
public class PinyinAnalyzer extends Analyzer {
    private int minGram;
    private int maxGram;
    private boolean useSmart;

    public PinyinAnalyzer() {
        this(Constant.DEFAULT_MIN_GRAM, Constant.DEFAULT_MAX_GRAM, Constant.DEFAULT_IK_USE_SMART);
    }

    public PinyinAnalyzer(boolean useSmart) {
        this(Constant.DEFAULT_MIN_GRAM, Constant.DEFAULT_MAX_GRAM, useSmart);
    }

    public PinyinAnalyzer(int maxGram) {
        this(Constant.DEFAULT_MIN_GRAM, maxGram, Constant.DEFAULT_IK_USE_SMART);
    }

    public PinyinAnalyzer(int maxGram, boolean useSmart) {
        this(Constant.DEFAULT_MIN_GRAM, maxGram, useSmart);
    }

    public PinyinAnalyzer(int minGram, int maxGram, boolean useSmart) {
        super();
        this.minGram = minGram;
        this.maxGram = maxGram;
        this.useSmart = useSmart;
    }

    @Override
    protected TokenStreamComponents createComponents(String fieldName) {
        // Lucene passes the real field text later via Tokenizer.setReader(),
        // so the reader built from fieldName here presumably only acts as a placeholder.
        Reader reader = new BufferedReader(new StringReader(fieldName));
        Tokenizer tokenizer = new IKTokenizer(reader, useSmart);
        // Convert to pinyin
        TokenStream tokenStream = new PinyinTokenFilter(tokenizer,
                Constant.DEFAULT_FIRST_CHAR, Constant.DEFAULT_MIN_TERM_LRNGTH);
        // Apply n-gram processing to the pinyin
        tokenStream = new PinyinNGramTokenFilter(tokenStream, this.minGram, this.maxGram);
        tokenStream = new LowerCaseFilter(tokenStream);
        tokenStream = new StopFilter(tokenStream, StopAnalyzer.ENGLISH_STOP_WORDS_SET);
        return new Analyzer.TokenStreamComponents(tokenizer, tokenStream);
    }
}

package com.yida.framework.lucene5.pinyin.test;

import java.io.IOException;

import org.apache.lucene.analysis.Analyzer;

import com.yida.framework.lucene5.pinyin.PinyinAnalyzer;
import com.yida.framework.lucene5.util.AnalyzerUtils;

/**
 * Pinyin analyzer test
 * @author Lanxiaowei
 */
public class PinyinAnalyzerTest {
    public static void main(String[] args) throws IOException {
        String text = "2011年3月31日,孙燕姿与相恋5年多的男友纳迪姆在新加坡登记结婚";
        // maxGram = 20; minGram and useSmart take their defaults from Constant
        Analyzer analyzer = new PinyinAnalyzer(20);
        AnalyzerUtils.displayTokens(analyzer, text);
    }
}

package com.yida.framework.lucene5.pinyin.test;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field.Store;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexReader;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.Term;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TermQuery;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.store.Directory;
import org.apache.lucene.store.RAMDirectory;

import com.yida.framework.lucene5.pinyin.PinyinAnalyzer;

/**
 * Pinyin search test
 * @author Lanxiaowei
 */
public class PinyinSearchTest {
    public static void main(String[] args) throws Exception {
        String fieldName = "content";
        String queryString = "sunyanzi";

        Directory directory = new RAMDirectory();
        Analyzer analyzer = new PinyinAnalyzer();
        IndexWriterConfig config = new IndexWriterConfig(analyzer);
        IndexWriter writer = new IndexWriter(directory, config);

        /**************** Build the test index: begin ********************/
        Document doc1 = new Document();
        doc1.add(new TextField(fieldName, "孙燕姿,新加坡籍华语流行音乐女歌手,刚出道便被誉为华语“四小天后”之一。", Store.YES));
        writer.addDocument(doc1);

        Document doc2 = new Document();
        doc2.add(new TextField(fieldName, "1978年7月23日,孙燕姿出生于新加坡,祖籍中国广东省潮州市,父亲孙耀宏是新加坡南洋理工大学电机系教授,母亲是一名教师。姐姐孙燕嘉比燕姿大三岁,任职新加坡巴克莱投资银行副总裁,妹妹孙燕美小六岁,是新加坡国立大学医学硕士,燕姿作为家中的第二个女儿,次+女=姿,故取名“燕姿”", Store.YES));
        writer.addDocument(doc2);

        Document doc3 = new Document();
        doc3.add(new TextField(fieldName, "孙燕姿毕业于新加坡南洋理工大学,父亲是燕姿音乐的启蒙者,燕姿从小热爱音乐,五岁开始学钢琴,十岁第一次在舞台上唱歌,十八岁写下第一首自己作词作曲的歌《someone》。", Store.YES));
        writer.addDocument(doc3);

        Document doc4 = new Document();
        doc4.add(new TextField(fieldName, "华纳音乐于2000年6月9日推出孙燕姿的首张音乐专辑《孙燕姿同名专辑》,孙燕姿由此开始了她的音乐之旅。", Store.YES));
        writer.addDocument(doc4);

        Document doc5 = new Document();
        doc5.add(new TextField(fieldName, "2000年,孙燕姿的首张专辑《孙燕姿同名专辑》获得台湾地区年度专辑销售冠军,在台湾卖出30余万张的好成绩,同年底,发行第二张专辑《我要的幸福》", Store.YES));
        writer.addDocument(doc5);

        Document doc6 = new Document();
        doc6.add(new TextField(fieldName, "2011年3月31日,孙燕姿与相恋5年多的男友纳迪姆在新加坡登记结婚", Store.YES));
        writer.addDocument(doc6);

        // Force-merge into a single segment
        writer.forceMerge(1);
        writer.close();
        /**************** Build the test index: end ********************/

        IndexReader reader = DirectoryReader.open(directory);
        IndexSearcher searcher = new IndexSearcher(reader);
        // TermQuery is not analyzed: the pinyin string is looked up as an exact indexed term
        Query query = new TermQuery(new Term(fieldName, queryString));
        TopDocs topDocs = searcher.search(query, Integer.MAX_VALUE);
        ScoreDoc[] docs = topDocs.scoreDocs;
        if (null == docs || docs.length <= 0) {
            System.out.println("No results.");
            reader.close();
            return;
        }

        // Print the search results
        System.out.println("ID[Score]\tcontent");
        for (ScoreDoc scoreDoc : docs) {
            int docID = scoreDoc.doc;
            Document document = searcher.doc(docID);
            String content = document.get(fieldName);
            float score = scoreDoc.score;
            System.out.println(docID + "[" + score + "]\t" + content);
        }
        reader.close();
    }
}

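I have only posted the core classes here. For the other related classes, please download the attachment below and read through them in detail. That's all for pinyin search. If you still have questions, contact me on QQ (QQ: 7-3-6-0-3-1-3-0-5), or join my Java tech group to learn and discuss with us; you are very welcome. Group number: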

Reposted from: http://iamyida.iteye.com/blog/2207080