Preparation

- Prepare four machines with the following basic information:

| IP | Hostname | Role | OS | Memory |
|---|---|---|---|---|
| 192.168.242.136 | k8smaster | Kubernetes master node | CentOS 7.2 | 3G |
| 192.168.242.137 | k8snode1 | Kubernetes node | CentOS 7.2 | 2G |
| 192.168.242.138 | k8snode2 | Kubernetes node | CentOS 7.2 | 2G |
| 192.168.242.139 | k8snode3 | Kubernetes node | CentOS 7.2 | 2G |

- Set up passwordless SSH login from the master node to the node machines (see the linked guide for the detailed steps)

- The `/etc/hosts` file on every machine must contain:

192.168.242.136 k8smaster

192.168.242.137 k8snode1

192.168.242.138 k8snode2

192.168.242.139 k8snode3

To change the hostname on CentOS, see the referenced guide.
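Since the same four entries have to be added on every machine, it can help to append them idempotently. The following is a minimal sketch, not part of the original setup; the function name `add_host_entry` is hypothetical, and it assumes a hosts file in the usual `IP hostname` layout.

```shell
# Append an "IP hostname" entry to a hosts file unless the hostname is already mapped.
# add_host_entry is a hypothetical helper name; the file defaults to /etc/hosts.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Look for the hostname as a whole whitespace-delimited field on a non-comment line.
  if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Usage (as root, on every machine):
# add_host_entry 192.168.242.136 k8smaster
# add_host_entry 192.168.242.137 k8snode1
# add_host_entry 192.168.242.138 k8snode2
# add_host_entry 192.168.242.139 k8snode3
```

Running the helper twice leaves the file unchanged the second time, so it is safe to re-run on a machine that was already prepared.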

- Install `docker 17.03.2-ce` on every machine in advance (see the referenced guide for the installation steps)

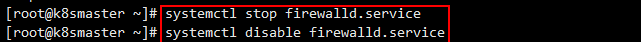

- Disable the firewall on all machines

systemctl stop firewalld.service

systemctl disable firewalld.service


- Disable SELinux on all machines so that containers can access the host filesystem

vim /etc/selinux/config

Set `SELINUX` to `disabled`. This setting takes effect after a reboot.

To turn off SELinux enforcement immediately for the current boot:

setenforce 0
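The manual edit in `vim` can also be scripted. The sketch below is one possible way to do it with `sed`; the function name `disable_selinux_in_config` is hypothetical, and it assumes the file contains an uncommented `SELINUX=` line, as the stock CentOS file does.

```shell
# Rewrite the active SELINUX= line to "disabled".
# disable_selinux_in_config is a hypothetical helper name.
disable_selinux_in_config() {
  local file="${1:-/etc/selinux/config}"
  # Only a line starting with "SELINUX=" is changed; SELINUXTYPE= and comments are untouched.
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$file"
}

# Usage (as root, on every machine):
# disable_selinux_in_config
# setenforce 0
```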

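- Configure the system routing parameters to prevent kubeadm from reporting routing warnings

In the `/etc/sysctl.d/` directory, create a Kubernetes configuration file named `kubernetes.conf` with the following content: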

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

Run the following command to apply the configuration:

sysctl --system

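Note: I added a new configuration file here instead of writing directly to `/etc/sysctl.conf`, so the option used to apply the configuration is `--system`. If you write the settings directly into `/etc/sysctl.conf`, use the `-p` option instead.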
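Creating the file can be scripted so that every machine gets identical content. This is a minimal sketch; the function name `write_k8s_sysctl` is hypothetical, and the target directory is a parameter only so the function can be tried against a scratch directory first.

```shell
# Write the two bridge settings into <dir>/kubernetes.conf.
# write_k8s_sysctl is a hypothetical helper name; dir defaults to /etc/sysctl.d.
write_k8s_sysctl() {
  local dir="${1:-/etc/sysctl.d}"
  cat > "$dir/kubernetes.conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Usage (as root, on every machine):
# write_k8s_sysctl && sysctl --system
```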

- Disable swap

Edit the configuration file `/etc/fstab`:

vim /etc/fstab

Comment out the swap line so that swap stays off after a reboot.

Then turn swap off for the current boot with:

swapoff -a

If swap is left on, kubeadm reports the following error when initializing Kubernetes:

[ERROR Swap]: running with swap on is not supported. Please disable swap
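Commenting out the swap entry can be done without opening an editor. The sketch below is an assumption-laden example rather than the author's method: the function name `comment_out_swap` is hypothetical, and it assumes swap entries in `/etc/fstab` are whitespace-separated lines containing `swap` as a standalone field.

```shell
# Prefix every uncommented fstab line that has "swap" as a standalone field with '#'.
# comment_out_swap is a hypothetical helper name; the file defaults to /etc/fstab.
comment_out_swap() {
  local file="${1:-/etc/fstab}"
  # Lines already starting with '#' do not match, so re-running changes nothing.
  sed -i -E 's/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Usage (as root, on every machine):
# cp /etc/fstab /etc/fstab.bak   # keep a backup before editing
# comment_out_swap
# swapoff -a
```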


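- Prepare the images

I followed a blog post for this setup, so all the images used here were downloaded from the address that post provides. Upload the image archive to every node.

Extract the archive: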

tar -jxvf k8s_images.tar.bz2

Then load the images:

docker load -i /usr/local/k8s_images/docker_images/etcd-amd64_v3.1.10.tar

docker load -i /usr/local/k8s_images/docker_images/flannel:v0.9.1-amd64.tar

docker load -i /usr/local/k8s_images/docker_images/k8s-dns-dnsmasq-nanny-amd64_v1.14.7.tar

docker load -i /usr/local/k8s_images/docker_images/k8s-dns-kube-dns-amd64_1.14.7.tar

docker load -i /usr/local/k8s_images/docker_images/k8s-dns-sidecar-amd64_1.14.7.tar

docker load -i /usr/local/k8s_images/docker_images/kube-apiserver-amd64_v1.9.0.tar

docker load -i /usr/local/k8s_images/docker_images/kube-controller-manager-amd64_v1.9.0.tar

docker load -i /usr/local/k8s_images/docker_images/kube-proxy-amd64_v1.9.0.tar

docker load -i /usr/local/k8s_images/docker_images/kube-scheduler-amd64_v1.9.0.tar

docker load -i /usr/local/k8s_images/docker_images/pause-amd64_3.0.tar

docker load -i /usr/local/k8s_images/kubernetes-dashboard_v1.8.1.tar

Adjust the paths to match where you extracted the archive. Once everything has loaded, run `docker images` to confirm that the images were imported.
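Typing one `docker load` per archive is repetitive, so a loop over the directory is a natural alternative. This is a sketch under assumptions: the function name `load_images` and the `DOCKER` override variable are hypothetical, and the directory is assumed to contain only image archives ending in `.tar`.

```shell
# Run "docker load -i" for every .tar file in a directory.
# load_images is a hypothetical helper; set DOCKER=echo to preview the commands.
load_images() {
  local dir="$1" tarball
  for tarball in "$dir"/*.tar; do
    [ -e "$tarball" ] || continue   # skip when the glob matched nothing
    ${DOCKER:-docker} load -i "$tarball"
  done
}

# Usage (on every node):
# load_images /usr/local/k8s_images/docker_images
# load_images /usr/local/k8s_images   # picks up kubernetes-dashboard_v1.8.1.tar
```

Setting `DOCKER=echo` first prints the commands instead of running them, which is a cheap way to check the paths before loading anything.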

That completes the preparation. The installation itself comes next.

Building the Kubernetes cluster

- Install socat, kubernetes-cni, kubelet, kubectl, and kubeadm on all nodes.

rpm -ivh /usr/local/k8s_images/socat-1.7.3.2-2.el7.x86_64.rpm

rpm -ivh /usr/local/k8s_images/kubernetes-cni-0.6.0-0.x86_64.rpm /usr/local/k8s_images/kubelet-1.9.9-9.x86_64.rpm

rpm -ivh /usr/local/k8s_images/kubectl-1.9.0-0.x86_64.rpm

rpm -ivh /usr/local/k8s_images/kubeadm-1.9.0-0.x86_64.rpm

Next, edit the kubelet configuration file:

vim /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

The kubelet `cgroup-driver` must match the one Docker uses. Run `docker info` to check Docker's `Cgroup Driver` value.

docker info

Here Docker's `Cgroup Driver` is `cgroupfs`, so the kubelet `cgroup-driver` must also be changed to `cgroupfs`.

After making the change, reload the configuration:

systemctl daemon-reload

Enable kubelet to start at boot:

systemctl enable kubelet
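The cgroup driver check and edit described above can be sketched as two small helpers. The function names are hypothetical, and the second one assumes the drop-in file already contains a `--cgroup-driver=<value>` argument; if it does not, nothing is changed and the file has to be edited by hand.

```shell
# Print the cgroup driver from `docker info` output read on stdin.
docker_cgroup_driver() {
  sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p'
}

# Replace the value of an existing --cgroup-driver= argument in the kubelet drop-in.
set_kubelet_cgroup_driver() {
  local driver="$1"
  local file="${2:-/etc/systemd/system/kubelet.service.d/10-kubeadm.conf}"
  sed -i "s/--cgroup-driver=[a-z]*/--cgroup-driver=${driver}/" "$file"
}

# Usage (as root, on every node):
# set_kubelet_cgroup_driver "$(docker info 2>/dev/null | docker_cgroup_driver)"
# systemctl daemon-reload
```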

- Configure the master node

2.1 Initialize Kubernetes

kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

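Kubernetes supports multiple network plugins such as flannel, weave, and calico. flannel is used here, so the `--pod-network-cidr` parameter must be set. 10.244.0.0/16 is the default network configured in `kube-flannel.yml`, and the value of `--pod-network-cidr` must match the `Network` parameter in that file.

The initialization output is as follows: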

[root@k8smaster ~]# kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.9.0

[init] Using Authorization modes: [Node RBAC]

[preflight] Running pre-flight checks.

[WARNING FileExisting-crictl]: crictl not found in system path

[preflight] Starting the kubelet service

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [k8smaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.242.136]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated sa key and public key.

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "scheduler.conf"

[controlplane] Wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] Wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] Wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] Waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests".

[init] This might take a minute or longer if the control plane images have to be pulled.

[apiclient] All control plane components are healthy after 44.002305 seconds

[uploadconfig] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[markmaster] Will mark node k8smaster as master by adding a label and a taint

[markmaster] Master k8smaster tainted and labelled with key/value: node-role.kubernetes.io/master=""

[bootstraptoken] Using token: abb43a.62186b817d71bcd2

[bootstraptoken] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: kube-dns

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join --token abb43a.62186b817d71bcd2 192.168.242.136:6443 --discovery-token-ca-cert-hash sha256:6a7625aa2928085fde84cfd918398408771dfe6af5c88c73b2d47527a00a8dad

Make a note of the `kubeadm join --token xxxx` command, because the token is needed when joining the node machines. If you lose it, list the existing tokens with:

kubeadm token list

The token is valid for 24 hours. If it has expired, create a new one with:

kubeadm token create
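A new token alone is not the whole join command; the CA certificate hash is needed as well. The sketch below shows one way to recompute the hash and reassemble the command in the same form as the `kubeadm init` output above. The function names are hypothetical, and the `openssl` pipeline assumes the cluster CA uses an RSA key at `/etc/kubernetes/pki/ca.crt`.

```shell
# Print the SHA-256 hash of the CA public key (the value after "sha256:").
# Assumes an RSA CA key; ca_cert_hash is a hypothetical helper name.
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Assemble a join command in the form printed by kubeadm init.
join_command() {
  local token="$1" endpoint="$2" hash="$3"
  printf 'kubeadm join --token %s %s --discovery-token-ca-cert-hash sha256:%s\n' \
    "$token" "$endpoint" "$hash"
}

# Usage (on the master):
# join_command "$(kubeadm token create)" 192.168.242.136:6443 "$(ca_cert_hash)"
```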

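2.2 Configure environment variables

At this point the root user cannot yet use kubectl to control the cluster. Configure the environment variable as follows.

Write the setting to the bash_profile file: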

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

Apply it immediately:

source ~/.bash_profile

Check the version as a quick test:

kubectl version

2.3 Install flannel

Use the `kube-flannel.yml` file from the offline package directly:

kubectl create -f /usr/local/k8s_images/kube-flannel.yml

- Configure the node machines

Use the token generated when Kubernetes was initialized on the master node (see 2.1) to join the three nodes to the master. Run the following command on each node:

kubeadm join --token abb43a.62186b817d71bcd2 192.168.242.136:6443 --discovery-token-ca-cert-hash sha256:6a7625aa2928085fde84cfd918398408771dfe6af5c88c73b2d47527a00a8dad

After all nodes have joined, check on the master node whether they joined successfully:

kubectl get nodes
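Nodes usually take a short while to become ready after joining, so it can be handy to filter the listing down to the ones that are not. This is a small sketch; the function name `not_ready_nodes` is hypothetical, and it assumes the default column layout in which the second column is STATUS.

```shell
# Print the names of nodes whose STATUS column is anything other than "Ready".
# Reads `kubectl get nodes --no-headers` output on stdin.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage (on the master):
# kubectl get nodes --no-headers | not_ready_nodes
```

Empty output means every listed node reports `Ready`.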

Kubernetes creates flannel and kube-proxy pods on every node. List the pods with:

kubectl get pods --all-namespaces

View the cluster information:

kubectl cluster-info

Setting up the dashboard

On the master node, create the dashboard directly from the `kubernetes-dashboard.yaml` file in the offline package:

kubectl create -f /usr/local/k8s_images/kubernetes-dashboard.yaml

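Next, set up authentication. The default login methods are kubeconfig and token; here basic auth is used to authenticate against the apiserver.

Create `/etc/kubernetes/pki/basic_auth_file` to hold the credentials. Each line has the form password, username, user ID (in this example the password and the username are both `admin`):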

admin,admin,2
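Because the file stores a plaintext password, it is worth creating it with restrictive permissions. The sketch below is one way to do that; the function name `write_basic_auth_file` is hypothetical, and the column order it writes (password first, then username, then user ID) follows the Kubernetes static password file format.

```shell
# Write a single "password,username,uid" line to the basic auth file, readable by owner only.
# write_basic_auth_file is a hypothetical helper name.
write_basic_auth_file() {
  local user="$1" password="$2" uid="$3"
  local file="${4:-/etc/kubernetes/pki/basic_auth_file}"
  # The subshell keeps the tightened umask from leaking into the caller.
  ( umask 077; printf '%s,%s,%s\n' "$password" "$user" "$uid" > "$file" )
}

# Usage (as root, on the master):
# write_basic_auth_file admin admin 2
```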

Edit `/etc/kubernetes/manifests/kube-apiserver.yaml` to enable basic auth:

vim /etc/kubernetes/manifests/kube-apiserver.yaml

Add the following line to the apiserver command arguments:

- --basic_auth_file=/etc/kubernetes/pki/basic_auth_file

Restart kubelet:

systemctl restart kubelet

Update the kube-apiserver container:

kubectl apply -f /etc/kubernetes/manifests/kube-apiserver.yaml

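Next, grant permissions to the admin user. Kubernetes 1.6 and later use the RBAC authorization model, and the built-in cluster-admin role has full permissions. Binding admin to cluster-admin therefore gives admin the cluster-admin permissions.

First, inspect cluster-admin: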

kubectl get clusterrole/cluster-admin -o yaml

Bind admin to cluster-admin:

kubectl create clusterrolebinding login-on-dashboard-with-cluster-admin --clusterrole=cluster-admin --user=admin

Then check the binding:

kubectl get clusterrolebinding/login-on-dashboard-with-cluster-admin -o yaml

You can now try logging in. Open `https://192.168.242.136:32666` in a browser. Use Firefox here, because Chrome blocks access due to its security mechanisms.

Firefox shows the dashboard login page.

Choose the Basic authentication method and log in with the username and password configured in `/etc/kubernetes/pki/basic_auth_file`.

After a successful login, the dashboard overview is displayed.

At this point, the deployment is complete.