Contents

- Model overview

- resnet18 model walkthrough

- Summary

- resnet50

- Summary

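ResNet and ResNeXt have essentially the same architecture, so I start by studying how ResNet is constructed. The code is highly modular, which makes it convenient to work with. This post is a detailed reading of the ResNet code covered in the article "PyTorch Source Code Walkthrough: torchvision.models". I also strongly recommend that author's PyTorch tutorials!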

Model overview

The ResNet models can be imported directly from torchvision, and the pretrained argument controls whether pretrained weights are loaded.

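import torchvision

model = torchvision.models.resnet50(pretrained=False)

The constructor functions for resnet50, resnet101, resnet18, and the other variants are very short and differ only in the arguments they pass to ResNet. When pretrained weights are requested, load_state_dict loads the parameters downloaded by model_zoo.load_url; model_urls is a dictionary that maps each model name to the download URL of its weights.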

# Imports used by the code excerpts in this post
import math

import torch.nn as nn
import torch.utils.model_zoo as model_zoo


def resnet18(pretrained=False, **kwargs):
    """Constructs a ResNet-18 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet18']))
    return model


def resnet50(pretrained=False, **kwargs):
    """Constructs a ResNet-50 model.

    Args:
        pretrained (bool): If True, returns a model pre-trained on ImageNet
    """
    model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)
    if pretrained:
        model.load_state_dict(model_zoo.load_url(model_urls['resnet50']))
    return model


# Download URLs of the pretrained weights, keyed by model name
model_urls = {
    'resnet18': 'https://download.pytorch.org/models/resnet18-5c106cde.pth',
    'resnet34': 'https://download.pytorch.org/models/resnet34-333f7ec4.pth',
    'resnet50': 'https://download.pytorch.org/models/resnet50-19c8e357.pth',
    'resnet101': 'https://download.pytorch.org/models/resnet101-5d3b4d8f.pth',
    'resnet152': 'https://download.pytorch.org/models/resnet152-b121ed2d.pth',
}

Now for the core of the file, the ResNet class. It is assembled from a basic building block (Bottleneck or BasicBlock): _make_layer uses that block to build four large layers, and forward chains them in order.

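__init__ takes two important arguments, block and layers. block is one of two classes, Bottleneck or BasicBlock: resnet50, resnet101, and resnet152 use Bottleneck, while resnet18 and resnet34 use BasicBlock. Both are covered in detail below. layers is a list of four elements, each giving the number of residual sub-blocks in one of the four large layers built by _make_layer; for resnet50 the list is [3, 4, 6, 3].

_make_layer takes four arguments. The first, block, is the block class. The second, planes, is the base channel count of the layer (the layer outputs planes * block.expansion channels). The third, blocks, is the number of residual sub-blocks in the layer, that is, the corresponding entry of the layers list above. The fourth is the stride.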
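The layers list also explains the number in each model name. A minimal sketch, assuming the usual counting convention (the stem convolution, the convolutions on the main path of every block, and the final fully connected layer; downsample convolutions are not counted). The resnet18 and resnet50 lists are the ones shown above; the lists for resnet34, resnet101, and resnet152 are the values torchvision uses and do not appear in the excerpts here. CONFIGS and count_weighted_layers are illustrative names, not part of torchvision.

```python
# Residual sub-blocks per large layer, as passed to ResNet(block, layers).
CONFIGS = {
    "resnet18": ("BasicBlock", [2, 2, 2, 2]),
    "resnet34": ("BasicBlock", [3, 4, 6, 3]),
    "resnet50": ("Bottleneck", [3, 4, 6, 3]),
    "resnet101": ("Bottleneck", [3, 4, 23, 3]),
    "resnet152": ("Bottleneck", [3, 8, 36, 3]),
}

# Convolutions on the main path of one block (downsample convs excluded).
CONVS_PER_BLOCK = {"BasicBlock": 2, "Bottleneck": 3}


def count_weighted_layers(block_name, layers):
    """Return 1 (stem conv) + convs in all residual blocks + 1 (fc)."""
    return 1 + sum(layers) * CONVS_PER_BLOCK[block_name] + 1


depths = {name: count_weighted_layers(*cfg) for name, cfg in CONFIGS.items()}
for name, depth in depths.items():
    print(name, depth)
```

Under this convention each computed depth matches the number in the model name, which is a quick sanity check when reading the constructor functions.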

class ResNet(nn.Module):

    def __init__(self, block, layers, num_classes=1000):
        self.inplanes = 64  # input channels of the next block to be built
        super(ResNet, self).__init__()
        # Stem: 7x7 conv, BN, ReLU, 3x3 max pool
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.relu = nn.ReLU(inplace=True)
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        # Four large layers built from the given block class
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        self.avgpool = nn.AvgPool2d(7, stride=1)
        self.fc = nn.Linear(512 * block.expansion, num_classes)

        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))  # initialize conv weights
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)  # initialize BN parameters
                m.bias.data.zero_()

    def _make_layer(self, block, planes, blocks, stride=1):
        downsample = None
        # The shortcut needs a projection when the spatial size or channel count changes
        if stride != 1 or self.inplanes != planes * block.expansion:
            downsample = nn.Sequential(
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            )

        layers = []
        # Only the first block receives the stride and the downsample module
        layers.append(block(self.inplanes, planes, stride, downsample))
        self.inplanes = planes * block.expansion
        for i in range(1, blocks):
            layers.append(block(self.inplanes, planes))

        return nn.Sequential(*layers)

    def forward(self, x):
        x = self.conv1(x)
        x = self.bn1(x)
        x = self.relu(x)
        x = self.maxpool(x)

        x = self.layer1(x)
        x = self.layer2(x)
        x = self.layer3(x)
        x = self.layer4(x)

        x = self.avgpool(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, features)
        x = self.fc(x)

        return x

Next, the two block types, Bottleneck and BasicBlock. Their key feature is the residual structure. Like all models, they inherit from torch.nn.Module. Bottleneck overrides __init__ and forward(), and the line out += residual in forward() is the element-wise addition. Two things in Bottleneck need explaining: expansion = 4 and downsample. I am not clear on the theory behind downsampling, so we will work it out directly from the code later.
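The purpose of downsample can be checked with shape arithmetic alone: out += residual only works when both tensors have the same shape. A minimal sketch, assuming the standard convolution output-size formula; conv_out and bottleneck_shapes are hypothetical helpers that mirror the kernel sizes, strides, and paddings of the Bottleneck class in this post, and the 56x56 input size is an illustrative assumption.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def bottleneck_shapes(inplanes, planes, size, stride=1, expansion=4):
    """Return (main, identity, projected) shapes as (C, H, W) tuples."""
    h = conv_out(size, kernel=1)                         # conv1: 1x1
    h = conv_out(h, kernel=3, stride=stride, padding=1)  # conv2: 3x3, carries the stride
    h = conv_out(h, kernel=1)                            # conv3: 1x1, expands channels
    main = (planes * expansion, h, h)
    identity = (inplanes, size, size)                    # residual = x, unchanged
    # downsample: 1x1 conv with the same stride, then BatchNorm (shape-preserving)
    d = conv_out(size, kernel=1, stride=stride)
    projected = (planes * expansion, d, d)
    return main, identity, projected


# First block of a strided layer: channels and spatial size both change.
main, identity, projected = bottleneck_shapes(inplanes=256, planes=128, size=56, stride=2)
print(main, identity, projected)

# A later block of the same layer: the plain identity already matches.
main2, identity2, _ = bottleneck_shapes(inplanes=512, planes=128, size=28, stride=1)
print(main2, identity2)
```

In the first case the untouched input cannot be added to the main branch, while the projected shortcut can; in the second case no projection is needed, which is why only the first block of a layer receives downsample.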

class Bottleneck(nn.Module):
    expansion = 4  # output channels = planes * expansion

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(Bottleneck, self).__init__()
        # 1x1 conv reduces channels, 3x3 conv carries the stride, 1x1 conv expands channels
        self.conv1 = nn.Conv2d(inplanes, planes, kernel_size=1, bias=False)
        self.bn1 = nn.BatchNorm2d(planes)
        self.conv2 = nn.Conv2d(planes, planes, kernel_size=3, stride=stride,
                               padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(planes)
        self.conv3 = nn.Conv2d(planes, planes * 4, kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(planes * 4)
        self.relu = nn.ReLU(inplace=True)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)
        out = self.relu(out)

        out = self.conv3(out)
        out = self.bn3(out)

        # Project the shortcut when its shape differs from the main branch
        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual  # element-wise add
        out = self.relu(out)

        return out

The residual branch of BasicBlock contains two convolutional layers, both with 3x3 kernels.

def conv3x3(in_planes, out_planes, stride=1):
    """3x3 convolution with padding"""
    return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                     padding=1, bias=False)


class BasicBlock(nn.Module):
    expansion = 1  # output channels = planes

    def __init__(self, inplanes, planes, stride=1, downsample=None):
        super(BasicBlock, self).__init__()
        self.conv1 = conv3x3(inplanes, planes, stride)
        self.bn1 = nn.BatchNorm2d(planes)
        self.relu = nn.ReLU(inplace=True)
        self.conv2 = conv3x3(planes, planes)
        self.bn2 = nn.BatchNorm2d(planes)
        self.downsample = downsample
        self.stride = stride

    def forward(self, x):
        residual = x

        out = self.conv1(x)
        out = self.bn1(out)
        out = self.relu(out)

        out = self.conv2(out)
        out = self.bn2(out)

        # Project the shortcut when its shape differs from the main branch
        if self.downsample is not None:
            residual = self.downsample(x)

        out += residual  # element-wise add
        out = self.relu(out)

        return out

resnet18 model walkthrough

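resnet18 calls ResNet with the following arguments:

model = ResNet(BasicBlock, [2, 2, 2, 2], **kwargs)

ResNet.__init__()

The initialization before self.layer1 is straightforward.

self.layer1 = self._make_layer(block, 64, layers[0])

Here block = BasicBlock and layers[0] = 2.

Running _make_layer:

downsample = None. The if condition is not met (stride is 1 and self.inplanes equals planes * block.expansion = 64), so downsample stays None.

Next, the BasicBlock instances of this layer are built:

layers = [], then layers.append(BasicBlock(64, 64, 1, downsample=None))

The input channel count is updated: self.inplanes = planes * block.expansion = 64 * 1 = 64

The for loop builds the remaining blocks - 1 residual blocks, without passing downsample.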

self.layer2 runs

self._make_layer(block, 128, layers[1], stride=2)

downsample = None

The if condition is clearly met (stride is 2), so downsample = nn.Sequential(nn.Conv2d(64, 128, kernel_size=1, stride=2, bias=False), nn.BatchNorm2d(128))

layers = [], then layers.append(BasicBlock(64, 128, 2, downsample))

self.inplanes = 128 * 1 = 128

The for loop builds the remaining blocks - 1 residual blocks, without passing downsample.

layer3 and layer4 follow the same pattern as layer2, and together these layers make up resnet18.
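The walkthrough above can be replayed without building any modules. A minimal sketch, assuming the same planes and strides that ResNet.__init__ passes to _make_layer; plan_layers is a hypothetical helper that only mimics the channel bookkeeping, not the real method.

```python
def plan_layers(expansion, layers, inplanes=64):
    """Mimic the bookkeeping of ResNet._make_layer.

    Returns one list per large layer; each entry describes a block as
    (in_channels, planes, stride, has_downsample).
    """
    plan = []
    for planes, blocks, stride in zip([64, 128, 256, 512], layers, [1, 2, 2, 2]):
        # Same condition as in _make_layer
        has_downsample = stride != 1 or inplanes != planes * expansion
        layer = [(inplanes, planes, stride, has_downsample)]
        inplanes = planes * expansion
        # Remaining blocks: stride 1, no downsample
        layer += [(inplanes, planes, 1, False) for _ in range(1, blocks)]
        plan.append(layer)
    return plan


resnet18_plan = plan_layers(expansion=1, layers=[2, 2, 2, 2])
for i, layer in enumerate(resnet18_plan, start=1):
    print(f"layer{i}: {layer}")
```

For resnet18 only the first block of layer2, layer3, and layer4 gets a downsample module, which matches the step-by-step trace.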

Summary

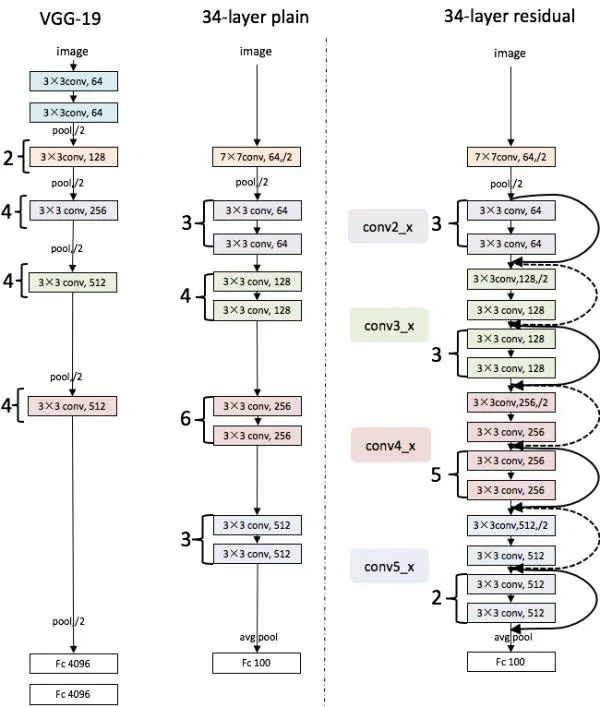

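From layer2 through layer4, the first block of each layer doubles the channel count, so its residual connection uses downsample. Within a layer, every later block keeps the same channel count. BasicBlock.expansion is always 1, so its effect cannot be seen here; we will examine it with resnet50. I could not find a figure of resnet18 itself; in the figure, the dashed lines are the downsample connections, which appear in the residual blocks where the channel count changes.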
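Besides doubling the channels, the first block of layer2 through layer4 also halves the spatial size, which is why forward can finish with AvgPool2d(7). A minimal sketch, assuming a 224x224 input (the usual ImageNet crop size, not stated in the code above) and the standard output-size formula for convolution and pooling; trace_spatial is a hypothetical helper.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a conv or pooling layer: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_spatial(size=224):
    """Follow the feature-map side length through ResNet.forward."""
    trace = [("input", size)]
    size = conv_out(size, kernel=7, stride=2, padding=3)
    trace.append(("conv1", size))
    size = conv_out(size, kernel=3, stride=2, padding=1)
    trace.append(("maxpool", size))
    for name, stride in [("layer1", 1), ("layer2", 2), ("layer3", 2), ("layer4", 2)]:
        # Only the strided 3x3 conv of the first block changes the size
        size = conv_out(size, kernel=3, stride=stride, padding=1)
        trace.append((name, size))
    size = conv_out(size, kernel=7, stride=1, padding=0)  # AvgPool2d(7, stride=1)
    trace.append(("avgpool", size))
    return trace


spatial_trace = trace_spatial(224)
for name, size in spatial_trace:
    print(name, size)
```

With a 224x224 input, layer4 produces a 7x7 map and the average pool reduces it to 1x1, so the flattened vector has exactly 512 * block.expansion features for fc. Other input sizes can leave a larger map after the pool, in which case the fixed-size fc layer no longer fits.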

resnet50

model = ResNet(Bottleneck, [3, 4, 6, 3], **kwargs)

As the call above shows, resnet50 uses the Bottleneck block, and the number of blocks in each large layer is also different.

layer1=self._make_layer(Bottleneck, 64, 3)

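The if condition is met, because self.inplanes = 64 differs from planes * block.expansion = 64 * 4 = 256. With stride = 1 this gives downsample = nn.Sequential(nn.Conv2d(64, 64 * 4, kernel_size=1, stride=1, bias=False), nn.BatchNorm2d(64 * 4))

layers.append(Bottleneck(64, 64, 1, downsample)). Inside the Bottleneck, the input passes through three convolutional layers, Conv2d(64, 64), Conv2d(64, 64), and Conv2d(64, 64 * 4), which guarantees that every block outputs planes * expansion channels. The assignment self.inplanes = planes * block.expansion then makes the input channel count of the following blocks planes * expansion as well.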
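The same channel flow can be listed for every block of resnet50. A minimal sketch, assuming the planes values that ResNet.__init__ passes to _make_layer; bottleneck_channels is a hypothetical helper that records the (in, out) channels of conv1, conv2, and conv3 in each Bottleneck without building any modules.

```python
EXPANSION = 4  # Bottleneck.expansion


def bottleneck_channels(layers, inplanes=64):
    """Return the per-block (conv1, conv2, conv3) channel pairs and the final width."""
    flows = []
    for planes, blocks in zip([64, 128, 256, 512], layers):
        for _ in range(blocks):
            flows.append((
                (inplanes, planes),            # conv1: 1x1, reduce
                (planes, planes),              # conv2: 3x3
                (planes, planes * EXPANSION),  # conv3: 1x1, expand
            ))
            # Mirrors self.inplanes = planes * block.expansion
            inplanes = planes * EXPANSION
    return flows, inplanes


flows, final_channels = bottleneck_channels([3, 4, 6, 3])
print(flows[0])        # first block of layer1
print(flows[1])        # second block of layer1
print(flows[3])        # first block of layer2
print(final_channels)  # input width of the fc layer
```

The first block of layer1 takes 64 channels in and every later block takes planes * expansion, and the final width equals 512 * block.expansion, the in_features of self.fc.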