深度學習中常見的幾個基礎概念

1. Linear regression :

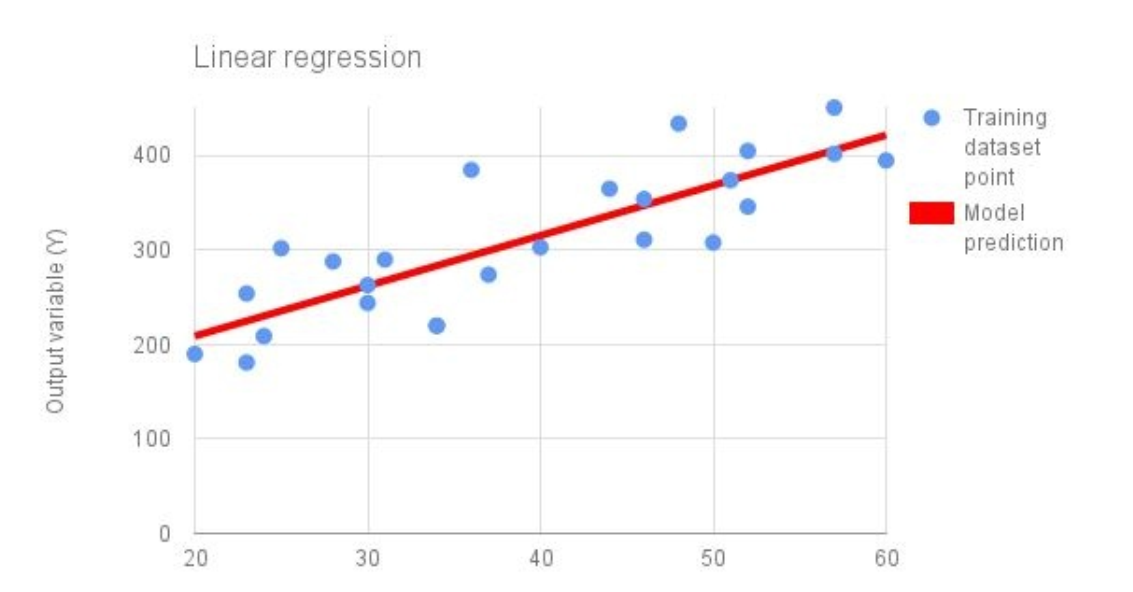

Linear regression 對監督學習問題來說, 是最簡單的模組化形式. 上圖藍色點表示 training data point, 紅色的線表示用于拟合訓練資料的線性函數. 線性函數的總的形式為:

在代碼中表示這個模型, 可以将其定義為 單列的向量 (a single column vector) :

# initialize variable / model parameters.

w = tf.Variable(tf.zeros([2, 1]), name = "weights")

b = tf.Variable(0., name = "bias")

def inference(X):

return tf.matmul(X, W) + b

既然我們已經定義了如果計算 loss , 此處 loss 函數我們設定為 squared error.

$ loss = \sum_{i} (y_i - y_{predicted_i})^2 $

我們統計 i, i 是每一個資料樣本. 代碼上表示為:

def loss (X, Y) :

Y_predicted = inference(X)

return tf.reduce_sum(tf.squared_difference(Y, Y_predicted))

def inputs():

weight_age = [[84, 46], [73, 20], [65, 52], [70, 30], [76, 57], [69, 25], [63, 28], [72, 36], [79

blood_fat_content = [354, 190, 405, 263, 451, 302, 288, 385, 402, 365, 209, 290, 346, 254, 395,

return tf.to_float(weight_age), tf.to_float(blood_fat_content)

我們利用 gradient descent 算法來優化模型的參數 :

def train(tota_loss) :

learning_rate = 0.000001

return tf.train.GradientDescentOptimizer (learning_rate).minimize(total_loss)

當你運作之後, 你會發現随着訓練步驟的進行, 展示的 loss 會逐漸的降低.

def evaluate(sess, X, Y):

print sess.run(inference([[80., 25.]])) # ~ 303

print sess.run(inference([[65., 25.]])) # ~ 256

Logistic regression.

線性回歸模型預測的是一個連續的數字 (continuous value) , 或者其他任何 real number. 我們接下來會提供一個可以回答 yes-or-no 問題的模型, 例如 : " Is this email spam ? "

有一個在機器學習領域被常用的一個模型, 稱為: logistic function. 也被稱為 sigmoid function, 形狀像 S .

$ f(x) = 1/(1+e^{-x}) $

這裡你看到了一個 logistic / sigmoid function 的圖, 像 "S" 形狀.

這個函數将 single input value 作為輸入. 為了給這個函數輸入多元, 或者我們訓練資料集樣本的特征 , 我們需要将他們組合為一個 value. 我們可以利用 線性回歸模型 來做這個事情 .

# same params and variable initialization as log reg.

w = tf.Variable(tf.zeros([5, 1]), name = "weights")

b = tf.Variable(0., name = "bias")

# former inference is now used for combing inputs.

def combine_inputs(X) :

return tf.matmul(X, W) + b

# new inferred value is the sigmoid applied to the former.

def inference(X) :

return tf.sigmoid (combine_inputs(X))

這種問題 交叉熵損失函數解決的比較好.

我們可以視覺上比較 兩個損失函數的表現, 根據預測的輸出.

def loss (X, Y) :

return tf.reduce_mean (tf.sigmoid_cross_entropy_with_logits (combine_inputs(X), Y)

What "Cross-entropy" means :

加載資料 :

def read_csv (batch_size, file_name, record_defaults) :

filename_queue = tf.train.string_input_producer([os.path.dirname(__file__) + "/" + file_name])

reader = tf.TextLineReader (skip_header_lines = 1)

key, value = reader.read(filename_queue)

# decode_csv will convert a Tensor from type string (the text line) in

# a tuple of tensor columns with the specified defaults, which also sets the data type for each column .

decoded = tf.decode_csv(value, record_defaults = record_defaults)

# batch actually reads the file and loads "batch_size" rows in a single tensor

return tf.train.shuffle_batch(decoded, batch_size=batch_size, capacity = batch_size * 50, min_after_dequeue = batch_size)

def inputs ():

passenger_id, survived, pclass, name, sex, age, sibsp, parch, ticket, fare, cabin, embarked = \

read_csv (100, "train.csv", [[0.0], [0.0]] ........)

# convert categorical data .

is_first_class = tf.to_float (tf.equal(pclass, [1]))

is_second_class = tf.to_float (tf.equal(pclass, [2]))

is_third_class = tf.to_float (tf.equal (pclass, [3]))

gender = tf.to_float (tf.equal (sex, ["female"]))

# Finally we pack all the features in a single matrix ;

# We then trainspose to have a matrix with one example per row and one feature per column.

features = tf.transpose (tf.pack([is_first_class, is_second_class, is_third_class, gender, age]))

survived = tf.reshape(survived, [100, 1])

return features, survived